The release of the Playdate this year has further rekindled my interest in the graphics styles of an older era. Or are they just older graphics implementations for the modern age? Even though modern electronics have been able to push 16.7 million colors on high-gamut displays for a long time, there is always a market for small, simple, and inexpensive displays. While the Playdate's 1-bit display delivers a hefty dose of nostalgia (along with a ton of fun), it has been exciting to see what developers can do within such tight graphical constraints. This sent me back down a deep rabbit hole, researching ancient dithering algorithms and color spaces to create the second version of the Agifier plug-in for Acorn.

What's new with Agifier 2.0

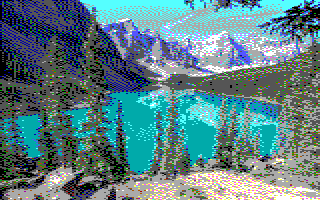

- Implemented Floyd-Steinberg dithering. This was a critical missing piece for simulating a fuller image with a limited color palette. There are many different dithering algorithms available, but I settled on one of the most famous ones.
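For readers unfamiliar with Floyd-Steinberg, here is a minimal Python sketch of the general technique. It is not the plug-in's actual code (which runs inside Acorn); it assumes the image is a list of rows of `(r, g, b)` tuples and picks the nearest palette color by plain squared RGB distance. The names `nearest` and `floyd_steinberg` are hypothetical. Each pixel's quantization error is pushed onto its not-yet-visited neighbors using the classic 7/16, 3/16, 5/16, and 1/16 weights.

```python
def nearest(color, palette):
    """Return the palette entry closest to color by squared RGB distance."""
    return min(palette, key=lambda p: sum((a - b) ** 2 for a, b in zip(color, p)))


def floyd_steinberg(pixels, palette):
    """Dither pixels (a list of rows of (r, g, b) tuples) to the given palette.

    Returns a new image whose pixels are all palette entries.
    """
    height, width = len(pixels), len(pixels[0])
    # Work on a float copy so the diffused error is not rounded away.
    work = [[[float(c) for c in px] for px in row] for row in pixels]
    out = [[None] * width for _ in range(height)]
    # Floyd-Steinberg weights (out of 16) for the unvisited neighbors:
    # right, below-left, below, below-right.
    neighbors = ((1, 0, 7), (-1, 1, 3), (0, 1, 5), (1, 1, 1))
    for y in range(height):
        for x in range(width):
            old = work[y][x]
            new = nearest(old, palette)
            out[y][x] = new
            error = [o - n for o, n in zip(old, new)]
            for dx, dy, weight in neighbors:
                nx, ny = x + dx, y + dy
                if 0 <= nx < width and ny < height:
                    for c in range(3):
                        work[ny][nx][c] += error[c] * weight / 16
    return out


# Usage: a flat mid-gray image dithered to a 1-bit (black/white) palette.
BLACK_WHITE = [(0, 0, 0), (255, 255, 255)]
gray = [[(128, 128, 128)] * 8 for _ in range(8)]
dithered = floyd_steinberg(gray, BLACK_WHITE)
for row in dithered:
    print("".join("#" if px == (255, 255, 255) else "." for px in row))
```

Instead of a solid block of one color, the flat gray comes out as a mix of black and white pixels whose overall density approximates the original brightness, which is the same trick that makes a 16-color (or 1-bit) palette look richer than it is.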

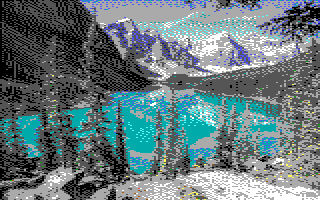

- Experimented with the CIE Lab color space. As Tanner Helland mentions in a blog post, comparing colors in the CIE Lab color space might work better than comparing them in RGB, but I did not get any better results than with either RGB or my own custom selection method.
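Comparing colors in CIE Lab means converting each RGB value first. The sketch below assumes 8-bit sRGB input and a D65 white point, and uses the simple CIE76 color difference (plain Euclidean distance in Lab). The function names `srgb_to_lab`, `delta_e76`, and `nearest_lab` are illustrative, not taken from Agifier.

```python
import math

# D65 reference white in XYZ (Y normalized to 1.0).
WHITE_D65 = (0.95047, 1.0, 1.08883)


def _linearize(channel):
    """Undo the sRGB transfer curve for one 0-255 channel."""
    c = channel / 255
    return c / 12.92 if c <= 0.04045 else ((c + 0.055) / 1.055) ** 2.4


def _f(t):
    """CIE Lab companding function (cube root with a linear toe near zero)."""
    delta = 6 / 29
    return t ** (1 / 3) if t > delta ** 3 else t / (3 * delta ** 2) + 4 / 29


def srgb_to_lab(rgb):
    """Convert an 8-bit sRGB triple to CIE L*a*b* (D65)."""
    r, g, b = (_linearize(c) for c in rgb)
    # Linear sRGB -> CIE XYZ.
    x = 0.4124 * r + 0.3576 * g + 0.1805 * b
    y = 0.2126 * r + 0.7152 * g + 0.0722 * b
    z = 0.0193 * r + 0.1192 * g + 0.9505 * b
    fx, fy, fz = (_f(v / w) for v, w in zip((x, y, z), WHITE_D65))
    return (116 * fy - 16, 500 * (fx - fy), 200 * (fy - fz))


def delta_e76(c1, c2):
    """CIE76 color difference: Euclidean distance between two Lab colors."""
    return math.dist(c1, c2)


def nearest_lab(rgb, palette):
    """Pick the palette color (as RGB) whose Lab value is closest to rgb."""
    target = srgb_to_lab(rgb)
    return min(palette, key=lambda p: delta_e76(target, srgb_to_lab(p)))
```

In practice the palette's Lab values would be computed once up front rather than for every pixel; the conversion is repeated inside `nearest_lab` only to keep the sketch short.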

Screenshots

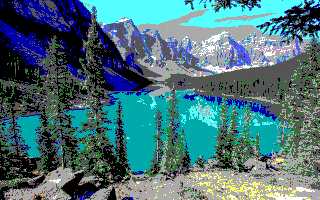

Panels: Original Image; Agifier 1.x; Agifier 2.0; Agifier 2.0 using the CIE Lab color model

Download + Installation

- Download: Download the files directly from GitHub, or clone the repository with git:

  `git clone https://github.com/edenwaith/Agifier.git`

- Installation: Copy the `Agifier.acplugin` plug-in bundle (found inside the `Agifier` folder) into the `~/Library/Application Support/Acorn/Plug-Ins` folder on your computer. Create the `Plug-Ins` folder if it does not exist yet.

- Usage: Restart Acorn after copying over the plug-in, then open a file and select the Filter > Stylize > Agifier menu. This will reduce the size of the image and reduce its color palette down to the standard 16 EGA colors that were used in Sierra games.
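For reference, the 16 standard EGA colors are listed below as a Python table, along with a plain nearest-color lookup (no dithering) using squared RGB distance. The RGB values are the standard EGA ones; the helper `nearest_ega` is a hypothetical illustration, not the plug-in's code.

```python
# The 16 standard EGA colors, as 8-bit RGB triples.
EGA_PALETTE = {
    "black": (0x00, 0x00, 0x00),
    "blue": (0x00, 0x00, 0xAA),
    "green": (0x00, 0xAA, 0x00),
    "cyan": (0x00, 0xAA, 0xAA),
    "red": (0xAA, 0x00, 0x00),
    "magenta": (0xAA, 0x00, 0xAA),
    "brown": (0xAA, 0x55, 0x00),
    "light gray": (0xAA, 0xAA, 0xAA),
    "dark gray": (0x55, 0x55, 0x55),
    "light blue": (0x55, 0x55, 0xFF),
    "light green": (0x55, 0xFF, 0x55),
    "light cyan": (0x55, 0xFF, 0xFF),
    "light red": (0xFF, 0x55, 0x55),
    "light magenta": (0xFF, 0x55, 0xFF),
    "yellow": (0xFF, 0xFF, 0x55),
    "white": (0xFF, 0xFF, 0xFF),
}


def nearest_ega(rgb):
    """Return the (name, rgb) EGA entry with the smallest squared RGB distance."""
    return min(
        EGA_PALETTE.items(),
        key=lambda item: sum((a - b) ** 2 for a, b in zip(rgb, item[1])),
    )


print(nearest_ega((30, 140, 30)))  # ('green', (0, 170, 0))
```

Note that each channel only takes the values 0x00, 0x55, 0xAA, or 0xFF, which is why so many in-between shades collapse onto the same few entries.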

Future Work

- Continue exploring better color selection, especially with regard to greens, and perhaps experiment with additional color models. Unfortunately, human color perception does not always align with the math of finding the "closest" color, so many of the greens get washed out and turn into more muted greys and browns. This is partially because the human eye is more sensitive to green than to blue. I did add another example for selecting colors (a modified Euclidean method), but it didn't seem to help much.

- Use different dithering methods to see if the results come out any better or worse.

- [Far Off Future] Implement Machine Learning (ML) methods to determine the best way to make a proper EGA representation of an image. Perhaps also use checkerboard dithering patterns to simulate certain color areas (in the style of SCI0-era Sierra games of the late 1980s).
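As one example of a weighted ("modified") Euclidean distance, the sketch below implements the "redmean" approximation described in the CompuPhase article "Colour metric" by Thiadmer Riemersma. It weights green differences most heavily and shifts weight between red and blue depending on the average red level of the two colors. This is an illustrative assumption; it is not necessarily the modified Euclidean method included with Agifier.

```python
import math


def euclidean_distance(c1, c2):
    """Plain Euclidean distance in RGB space."""
    return math.dist(c1, c2)


def redmean_distance(c1, c2):
    """Weighted ('redmean') Euclidean distance between two 8-bit RGB colors.

    Green differences get a fixed weight of 4; the red and blue weights
    slide between 2 and just under 3 depending on the pair's average red.
    """
    r1, g1, b1 = c1
    r2, g2, b2 = c2
    rmean = (r1 + r2) / 2
    dr, dg, db = r1 - r2, g1 - g2, b1 - b2
    return math.sqrt(
        (2 + rmean / 256) * dr * dr
        + 4 * dg * dg
        + (2 + (255 - rmean) / 256) * db * db
    )


# The same 10-unit step is equal under plain Euclidean distance,
# but counts for more in green than in red or blue under redmean.
for step in ((10, 0, 0), (0, 10, 0), (0, 0, 10)):
    print(step, euclidean_distance((0, 0, 0), step),
          round(redmean_distance((0, 0, 0), step), 2))
```

Either function can be swapped into a nearest-color search in place of plain squared RGB distance, which makes it easy to compare how each metric treats the greens.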